2022 Screenshot Update

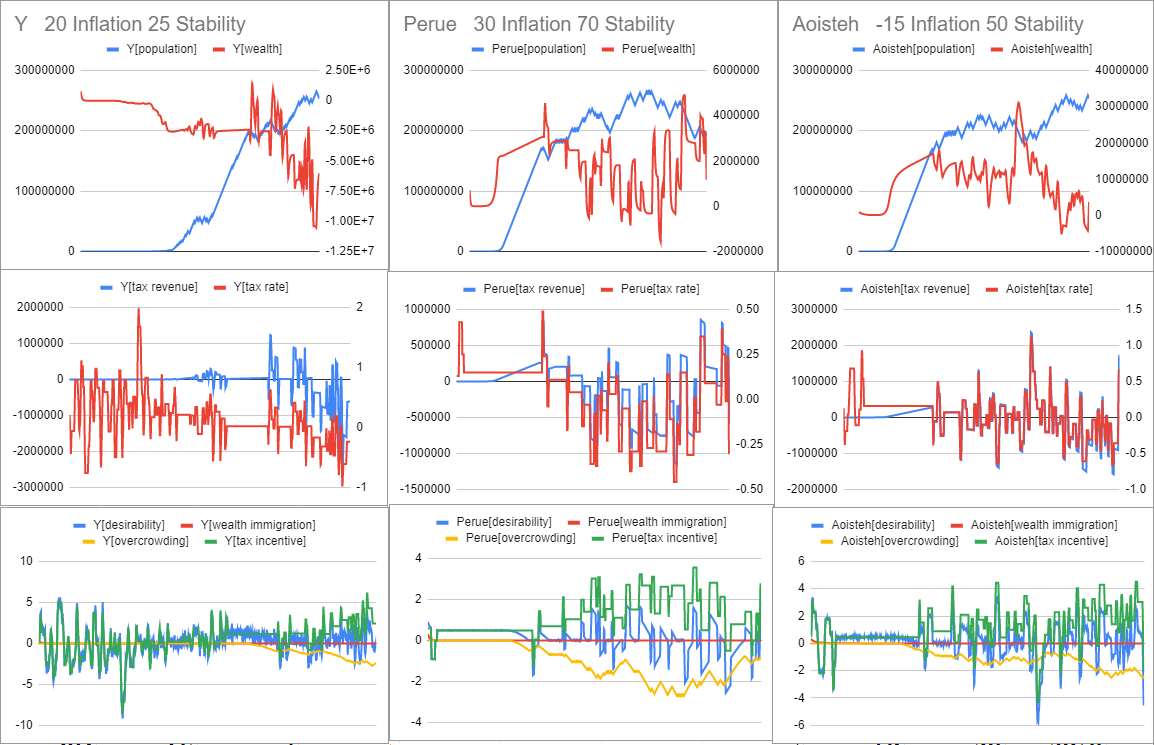

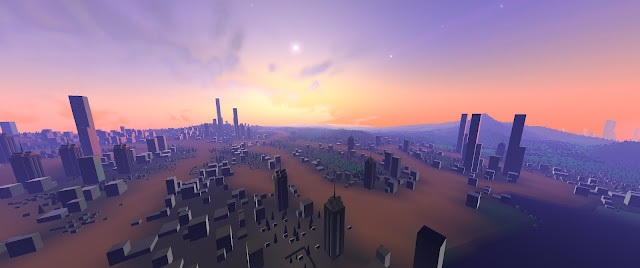

Work on the game is ongoing! Since my last posts about evolving cities, I've done a lot of work improving the game - especially the evolving cities, clouds and overall performance. I can now run the game in ultrawide resolution at 30fps on planet surfaces, which are the most costly scenes to render. This is a major achievement for my game engine, and will unblock the development of many features to come.

Improvements to Evolving Cities

There are many improvements to the evolving cities simulation. Road biomes (I haven't done road meshes yet) connect major installations, and residential / city zones are more likely to grow along these corridors. New installations are built in proximity to the headquarters. The roads are generated using an adaptation of the erosion code, in order to find the path-of-least-resistance.

Cities evolve along road lines connecting major installations

This city formed around a seam of crystals

The Empir...