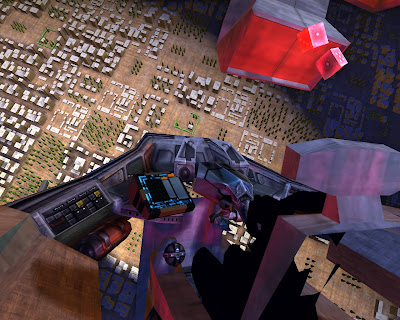

Lighting the cockpit, pt. 2

Changed the exporters to read the object name and initialise the file/save dialog with it. This should make exporting objects much quicker, and it also makes explicit and easy the rule that you must save the mesh file under the same name as the object. i.e. as long as you name your object, export is as quick as pressing run exporter and OK.

Added 4 lights into the cockpit. This runs slow! It's now doing 5 passes over the entire scene with shadow-mapped lights.

Optimise the lighting: get lights to cull their drawCommands against both the camera and the light. Skipping any culling at the Python Scene level; it is done immediately in C++.

Solution: LightingRenderChannel has lightCuller_ and cameraCuller_; these run the light culler first, then the camera culler.

Bug: you can see black squares around the light frustum. Fix by turning off the AmbientLightingChannel.

Looking into why meshes bound to AmbientLightingChannel are causing the lighting anomaly.

ambient_cube_map.vsh : Outputs the projec...
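
For reference, here is a rough sketch of the light-then-camera culling idea from the lighting notes above. It is not the engine's actual code: `Culler`, `Plane`, the `DrawCommand` layout and `cullForLight` are hypothetical names made up for illustration, and it assumes each draw command carries a bounding sphere that is tested against inward-facing frustum planes.

```cpp
#include <cassert>
#include <utility>
#include <vector>

struct Vec3 { float x, y, z; };

// Plane with an inward-facing normal: points with dot(n, p) + d >= 0 are inside.
struct Plane { Vec3 n; float d; };

// Hypothetical draw command: just an id plus a bounding sphere.
struct DrawCommand {
    int id;
    Vec3 centre;
    float radius;
};

// Hypothetical culler: a set of frustum planes (the light's or the camera's).
class Culler {
public:
    explicit Culler(std::vector<Plane> planes) : planes_(std::move(planes)) {}

    // A sphere is rejected only when it lies entirely behind some plane.
    bool visible(const DrawCommand& dc) const {
        assert(dc.radius >= 0.0f);
        for (const Plane& p : planes_) {
            const float dist = p.n.x * dc.centre.x + p.n.y * dc.centre.y
                             + p.n.z * dc.centre.z + p.d;
            if (dist < -dc.radius) return false;
        }
        return true;
    }

    // Returns the subset of commands that survive this culler.
    std::vector<DrawCommand> cull(const std::vector<DrawCommand>& in) const {
        std::vector<DrawCommand> out;
        for (const DrawCommand& dc : in) {
            if (visible(dc)) out.push_back(dc);
        }
        return out;
    }

private:
    std::vector<Plane> planes_;
};

// Run the light culler first, then the camera culler on what is left,
// so a light pass only draws what is both lit and on screen.
std::vector<DrawCommand> cullForLight(const Culler& lightCuller,
                                      const Culler& cameraCuller,
                                      const std::vector<DrawCommand>& commands) {
    return cameraCuller.cull(lightCuller.cull(commands));
}
```

With 4 lights plus the base pass, each light pass would then only touch the draw commands that fall inside both frusta, rather than the entire scene.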